A few weeks ago, I saw a tweet from a guy who built himself a personalized morning newscast.

Every morning, he gets a short AI-generated video with the news he cares about, delivered by Angela Merkel in a German accent.

now: openclaw gives me a daily personalized news brief through angela merkel posing as a news anchor with a heavy german accent no one understands

— Nucleus☕️ (@EsotericCofe) February 26, 2026

the age of PERSONALIZED SOFTWARE is HERE pic.twitter.com/X6th3CS4N0

The Angela Merkel part was not so appealing to me. But the personalized news part was.

So I wanted to see what it would take to build my own version, and more importantly, what it would actually cost to run on a daily basis today (April 2026).

Step 1: Finding the news source

I'm interested in receiving daily AI news so I don't miss any important developments.

Step one is figuring out where I can get an RSS feed for a reliable news source for technology and AI news.

I decided to go with TechCrunch for this experiment. Their AI RSS feed is at this link: https://techcrunch.com/category/artificial-intelligence/feed/

Cost of this step: $0
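For a sense of how little is involved, here is a minimal sketch of pulling the latest five stories from that feed using only the Python standard library. It is not the code CofounderGPT ended up writing. It assumes the feed is plain RSS 2.0 with newest items first, so "top 5" is read as "first five items", and the function names and the User-Agent string are my own inventions.

```python
import urllib.request
import xml.etree.ElementTree as ET

FEED_URL = "https://techcrunch.com/category/artificial-intelligence/feed/"


def parse_top_stories(rss_xml: bytes, limit: int = 5) -> list:
    """Return the first `limit` items of an RSS 2.0 document as dicts."""
    root = ET.fromstring(rss_xml)
    stories = []
    # RSS 2.0 layout: <rss><channel><item>...</item></channel></rss>
    for item in root.findall("./channel/item")[:limit]:
        stories.append({
            "title": (item.findtext("title") or "").strip(),
            "link": (item.findtext("link") or "").strip(),
            "summary": (item.findtext("description") or "").strip(),
        })
    return stories


def fetch_top_stories(url: str = FEED_URL, limit: int = 5) -> list:
    """Download the feed and return its first `limit` stories."""
    # Some servers reject requests that carry no User-Agent header.
    request = urllib.request.Request(
        url, headers={"User-Agent": "morning-news-sketch/0.1"}
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return parse_top_stories(response.read(), limit)
```

Calling `fetch_top_stories()` returns a list of dicts with `title`, `link`, and `summary` keys, which is enough to hand to whatever writes the script.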

Step 2: Creating the newscaster

Next, I needed to decide who I wanted to deliver my morning news feed. I had a bunch of ideas for who should deliver the news, most of them stupid.

Eventually, I settled on The Dude from The Big Lebowski.

I don't know if The Dude is the ideal person to explain AI news, but that's kind of the point. If the news is going to be personalized, then I should be able to make it as useful or as ridiculous as I want.

So I went to Gemini and, using Nano Banana Pro 2, generated an image of The Dude sitting in a newsroom delivering the news:

The prompt I used to generate this image:

Photorealistic cinematic shot of The Dude (Jeff Bridges, Big Lebowski) behind a modern news anchor desk, front-facing, looking straight into the camera. Beige striped bathrobe over a faded t-shirt, shaggy hair, scruffy beard. A White Russian in a lowball glass sits on the desk next to a microphone and news script. Blurred newsroom behind him with glowing monitors and studio lighting. Medium shot, chest up, 50mm, shallow depth of field, warm broadcast lighting.

Side note: I did not write this fancy prompt. I wrote a basic version of it and had Claude clean it up and make it more precise.

Cost of this step: Included as part of my $20 per month subscription to Gemini.

Step 3: Setting up a Fal.ai account

I asked CofounderGPT to research which video generation tool we could use to create the video. After going back and forth on a few options, we settled on Fal.ai because it has the widest variety of video models to choose from, including one that animates an image and lets us add audio over it.

Setting up a Fal.ai account is simple and costs nothing. But in order to use any of the models, you need to buy credits. So I bought $50 of credits to start.

I then generated an API key which I will need in order to generate the video and audio for my personalized news.

Cost of this step: $50, but it will be used across multiple videos.
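With a key in hand, a call to the image-plus-audio model used later in this post (VEED Fabric 1.0) might look roughly like the sketch below. Treat it as an illustration under assumptions, not the code that actually ran: the endpoint ID `veed/fabric-1.0`, the argument names, the `480p` default, and the response shape are all guesses to check against the model page on Fal.ai. The client is passed in as a parameter, so the function works with anything that exposes a `subscribe(endpoint, arguments=...)` method.

```python
import os

# ASSUMPTION: endpoint ID for VEED Fabric 1.0 on Fal.ai; verify on the model page.
FABRIC_ENDPOINT = "veed/fabric-1.0"


def build_fabric_arguments(image_url, audio_url, resolution="480p"):
    """Build the request payload. Field names are assumptions, not confirmed."""
    return {
        "image_url": image_url,
        "audio_url": audio_url,
        "resolution": resolution,
    }


def generate_video(client, image_url, audio_url, resolution="480p"):
    """Animate `image_url` to `audio_url` and return the resulting video URL."""
    # The fal Python client reads the API key from the FAL_KEY environment variable.
    if not os.environ.get("FAL_KEY"):
        raise RuntimeError("Set FAL_KEY to your Fal.ai API key first")
    result = client.subscribe(
        FABRIC_ENDPOINT,
        arguments=build_fabric_arguments(image_url, audio_url, resolution),
    )
    # ASSUMPTION: the response looks like {"video": {"url": "..."}}.
    return result["video"]["url"]
```

In real use you would `import fal_client` and pass the module itself as `client`, after uploading the image and audio somewhere the model can reach them. Keeping the key in an environment variable rather than in the script is the main design choice worth copying here.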

Step 4: Cloning The Dude's voice

You used to be able to easily clone any voice with ElevenLabs, but they've gotten a lot stricter about this. So I had to go with a Chinese model to do this since Chinese models are less concerned with copyright law.

If you just want any voice that's not a real person, ElevenLabs is the way to go. They have a wide variety of voices to choose from. I generally use ElevenLabs for all voice stuff. But this time I decided to be fancy so there are some extra steps involved.

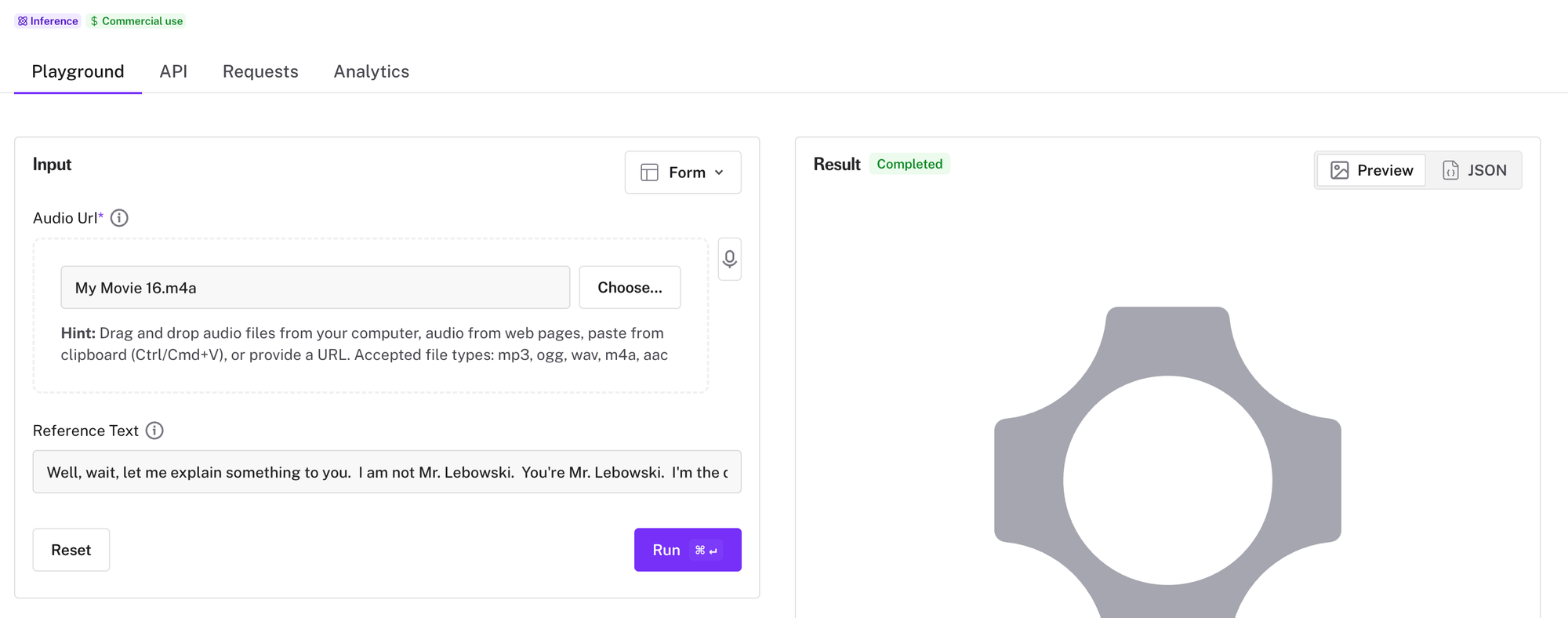

So I needed to clone The Dude's voice, and since we'll be using Fal.ai to generate the video, we'll also use it to clone the voice via Qwen3-TTS.

The first thing I did here was download a few clips of The Dude from YouTube. I cut them down to just The Dude's lines in iMovie and then exported only the audio. This was used as my reference audio.

Then I went into Fal.ai at this link: https://fal.ai/models/fal-ai/qwen-3-tts/clone-voice/1.7b/. I uploaded the reference audio along with a transcription of it, and Fal.ai created a clone of the voice and gave me some parameters I could use to create videos with The Dude's voice.

Cost of this step: about $1 across a few attempts to clone The Dude's voice.

Step 5: Putting it all together

All the previous steps were the prep we needed to do before actually putting all this together. But I'm not actually gonna do anything myself, other than explain to CofounderGPT what he needs to do.

This is the part that matters more than people think.

The tools are one thing. But the actual work is turning the idea into a sequence of steps that an agent can execute without me babysitting it every morning.

Here is what I put into the Trello card for CofounderGPT:

We're gonna work on personalized news today.

Look at this tweet: https://x.com/EsotericCofe/status/2026854867856449746

We're going to be replicating this, but instead of Angela Merkel, we are going to have The Dude from The Big Lebowski delivering today's AI news to me at 6.30am EST every day. Here are the steps this cron job needs to follow:

1) Go fetch the top 5 AI news stories from the Tech Crunch AI RSS feed at 6am EST: https://techcrunch.com/category/artificial-intelligence/feed/

2) Spin up a GPT-5.4 subagent and have it draft a 60 to 80 second news segment with the top 5 news you just fetched from Tech Crunch. The person who will be delivering this news segment is The Dude from the Big Lebowski. So the news segment needs to be written in his voice and style.

3) Attached to this Trello card is the image we are going to use. It's a camera facing image of The Dude sitting in a news studio. This is what we are going to animate for our news cast.

4) I have created a clone of The Dude's voice using Qwen 3-TTS (https://qwen.ai/blog?id=qwen3-tts-vc-voicedesign) on Fal.ai (fal-ai/qwen-3-tts/clone-voice/1.7b). The JSON output is in the comments of this card and the "safetensors" file is attached to this card. This is the voice we are going to use to put the video together. Use the script you created in Step 2 to generate an audio clip of The Dude delivering the news.

5) I gave you the Fal.ai API key earlier to store it securely. Use this API key to generate a video with VEED Fabric 1.0 model using the image from Step 3 and the audio you generated in Step 4.

6) In the tweet it says that this is how he does the captions: "faster-whisper transcribes with word timestamps -> 3-word chunks -> burned into video with FFmpeg". Figure out how to implement captions on the video using this approach and add captions to it.

7) Send me the video at 6.30am every day with the latest AI news for that day.

That was the task. I would love to tell you that CofounderGPT nailed it immediately. Unfortunately, he did not.
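The captioning recipe in item 6 of the card is worth spelling out, because it is simpler than it sounds. Below is a rough sketch of the three stages, not the implementation CofounderGPT produced. The function names, the `small` model size, and the file paths are placeholders of mine. The transcription step uses faster-whisper's `word_timestamps=True` option, and the burn-in step uses FFmpeg's `subtitles` filter, which requires an FFmpeg build with libass.

```python
def srt_timestamp(seconds: float) -> str:
    """Format seconds as an SRT timestamp (HH:MM:SS,mmm)."""
    millis = round(seconds * 1000)
    hours, millis = divmod(millis, 3_600_000)
    minutes, millis = divmod(millis, 60_000)
    secs, millis = divmod(millis, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{millis:03d}"


def words_to_srt(words, chunk_size=3):
    """Group (text, start, end) word tuples into chunk_size-word SRT cues."""
    blocks = []
    for index in range(0, len(words), chunk_size):
        chunk = words[index:index + chunk_size]
        text = " ".join(word[0].strip() for word in chunk)
        # A cue spans from its first word's start to its last word's end.
        start, end = chunk[0][1], chunk[-1][2]
        cue_number = index // chunk_size + 1
        blocks.append(
            f"{cue_number}\n{srt_timestamp(start)} --> {srt_timestamp(end)}\n{text}\n"
        )
    return "\n".join(blocks)


def transcribe_words(audio_path, model_size="small"):
    """Return (text, start, end) tuples for every word in the audio."""
    # Third-party dependency, imported lazily: pip install faster-whisper
    from faster_whisper import WhisperModel

    model = WhisperModel(model_size)
    segments, _info = model.transcribe(audio_path, word_timestamps=True)
    return [(w.word, w.start, w.end) for seg in segments for w in seg.words]


def burn_in_command(video_in, srt_path, video_out):
    """Build the FFmpeg command that renders the captions into the frames."""
    return [
        "ffmpeg", "-y", "-i", video_in,
        "-vf", f"subtitles={srt_path}",
        "-c:a", "copy", video_out,
    ]
```

Write the output of `words_to_srt(transcribe_words("news.wav"))` to a file such as `captions.srt`, then pass the list from `burn_in_command(...)` to `subprocess.run`. Three-word chunks keep each caption short enough to read at a glance, which is presumably why the original tweet used them.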

The scheduled task required a script that wakes up every morning, fetches the latest AI stories, writes the script, generates the voice, creates the video, adds captions, and sends me the final result.
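In outline, that script is just a straight line of stages. The sketch below shows the shape of it under my own assumptions: every stage is a placeholder function you would supply yourself, and none of the names come from what CofounderGPT actually built.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Stages:
    """The pluggable steps of the daily run. All names here are hypothetical."""
    fetch_stories: Callable[[], list]
    write_script: Callable[[list], str]
    synthesize_voice: Callable[[str], str]   # script text -> audio path or URL
    animate_image: Callable[[str], str]      # audio -> raw video path or URL
    add_captions: Callable[[str, str], str]  # (video, audio) -> captioned video
    deliver: Callable[[str], None]


def run_daily_newscast(stages: Stages) -> str:
    """Run the stages in order and return the final captioned video."""
    stories = stages.fetch_stories()
    if not stories:
        # Stop before any paid step runs if there is nothing to report.
        raise RuntimeError("No stories fetched; skipping today's video")
    script = stages.write_script(stories)
    audio = stages.synthesize_voice(script)
    video = stages.animate_image(audio)
    captioned = stages.add_captions(video, audio)
    stages.deliver(captioned)
    return captioned
```

Keeping each stage behind a plain function makes it easy to swap a model or rerun one failing step without touching the rest. Bailing out on an empty feed matters more than it looks, since video generation is by far the most expensive step in the chain.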

A few things broke along the way. The custom voice was annoying to get right. The first scripts didn't sound enough like The Dude. The video generation needed a few tweaks. And, of course, there were random pipeline bugs because there are always random pipeline bugs.

All in all, it took about 30 minutes to get working. That's not bad. The important part is that the 30 minutes is the setup cost. After that, the system runs every morning on its own.

Cost of this step: about $20 in Opus 4.6 tokens via the API for CofounderGPT to do everything in Step 5.

Final Output

And here is the final output for today's news:

Cost of each video

After building the pipeline and running it a few times, we can determine what it costs for someone to receive a personalized 60-80 second video of the news every day.

Pulling the news from TechCrunch RSS: ~$0.05

Generating script using GPT-5.4: ~$0.02

Generating audio: ~$0.09 (60 seconds) to $0.12 (80 seconds)

Generating video: ~$4.80 (60 seconds) to $6.40 (80 seconds)

So the answer is:

A 60-80 second personalized video newscast costs roughly $5 to $6.60 per day right now.

And almost all of that is video generation.

The RSS feed is basically free. The script is basically free. The audio is cheap. The video is where the cost gets stupid.
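If you want to check the arithmetic, the figures above reduce to two flat costs and two per-second rates. The sketch below assumes audio and video costs scale linearly with duration, which matches the 60-second and 80-second numbers but is still my assumption. The constant and function names are mine.

```python
from decimal import Decimal

# Flat per-video costs, taken from the list above.
RSS_COST = Decimal("0.05")
SCRIPT_COST = Decimal("0.02")
# Per-second rates derived from the 60-second figures.
AUDIO_PER_SECOND = Decimal("0.0015")  # $0.09 / 60 s
VIDEO_PER_SECOND = Decimal("0.08")    # $4.80 / 60 s


def cost_breakdown(seconds: int) -> dict:
    """Return the audio, video, and total cost for a video of `seconds` length."""
    audio = AUDIO_PER_SECOND * seconds
    video = VIDEO_PER_SECOND * seconds
    total = RSS_COST + SCRIPT_COST + audio + video
    return {
        "audio": audio,
        "video": video,
        "total": total,
        "video_share": video / total,  # fraction of the total spent on video
    }
```

`cost_breakdown(60)["total"]` comes to $4.96 and `cost_breakdown(80)["total"]` to $6.59, with video accounting for about 97% of the total in both cases.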

Is it worth it?

As a fun experiment, yes.

As a product people would pay for every day, probably not.

I can't see many people paying $5 per day for a 60-second personalized video newscast. That's around $150 per month, which is insane for something that is basically "tell me what happened in AI today, but make The Dude say it."

At $5 per video, this is a fun demo.

At $0.50 per video, it starts getting interesting.

At $0.05 per video, it becomes a product category.
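To put a number on how far video pricing has to fall, here is a small back-of-the-envelope helper. It assumes a 60-second video and reuses this post's own figures for everything that isn't video: $0.07 for the feed and script, plus $0.0015 per second of audio. The function name and defaults are mine.

```python
def required_video_rate(target_price, seconds=60, fixed=0.07, audio_per_second=0.0015):
    """Per-second video price needed to hit `target_price` for one video.

    A negative result means the non-video costs alone already exceed the target.
    """
    non_video = fixed + audio_per_second * seconds
    return (target_price - non_video) / seconds
```

For a $0.50 video, the video model would need to cost a bit under $0.006 per second, roughly 14 times cheaper than the $0.08 per second implied above. For a $0.05 video the result is negative: the feed, script, and audio add up to about $0.16 on their own, so every step has to get cheaper, not just the video.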